History has a way of rhyming, they say.

Well, if it does, it surely does so in the project economy, too. And if that's true, we might as well study it, extract the learnings, and apply them to today's project-economy startups.

Which brings me to this article: it's the first in a series that examines historical parallels, pulling lessons from past infrastructure waves, tech companies, industrial conglomerates, and historical events. Successes and failures alike.

The goal is straightforward: help founders building in the project economy recognize patterns before they become obvious, inspire new founders, or, at minimum, provide some entertainment value while you navigate your own challenges.

This first piece is inspired by a concept I came across some time ago, called "commoditizing your complement". Carl Shapiro and Hal Varian came up with the economic theory, but Joel Spolsky made it famous in tech circles. It's been sitting at the back of my mind ever since.

Then, recently, upon reflecting on which AEC SaaS companies have actually built defensible businesses, this framework came back to mind. I thought of sharing it with you as an interesting first piece, so here we are!

Making your neighbor cheap

Before we start, let me share some definitions with you:

A substitute is a product a consumer might buy if the primary product becomes too expensive (e.g. chicken instead of beef), whereas a complement is a product usually purchased in tandem with another (e.g. gasoline for cars, an OS for computer/smartphone hardware, etc.).

Alright. Now, looking back at history, in many cases, the winners in tech have been the companies that understood how to shape the entire ecosystem around their offerings (and obviously had a great product, too!).

One of the most powerful ways to do this is to deliberately drive the products adjacent to yours (your complements) toward commodity status. The underlying economic principle is straightforward: when something becomes cheaper and more abundant, people buy and use more of it. But in multi-layered technology stacks, this creates a fascinating dynamic: by making the products that sit alongside yours inexpensive and ubiquitous, you can dramatically increase demand for whatever you're actually selling.

This approach works because technology markets rarely distribute value evenly across different layers. In any given stack, whether it's hardware and software, infrastructure and applications, or browsers and search engines, some layers capture most of the economic surplus while others become brutally price-competitive. If you hold a strong position in one layer and can deliberately push an adjacent layer toward commodity economics, you essentially expand your total addressable market.

The mechanism behind this is tied to how technology products create value through interaction and network effects: most digital products become more valuable as more people use them, but if a necessary adjacent product is expensive or scarce, that potential value never fully materializes. By making that adjacent layer cheap and widely available, you remove friction that would otherwise suppress adoption. The company pursuing this strategy doesn't necessarily profit directly from the commoditized layer; rather, it captures the bulk of the economic value at the layer where it maintains differentiation and control.
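To put rough numbers on the mechanism, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the linear demand curve, the function names `units_sold` and `core_revenue`, and all prices are hypothetical and not drawn from any real market. The only idea it encodes is that buyers react to the combined price of the core product and its complement.

```python
def units_sold(core_price, complement_price, market_size=1000.0, sensitivity=1.0):
    """Toy linear demand: buyers respond to the combined price of the bundle.

    All parameters are hypothetical and chosen only for illustration.
    """
    total_price = core_price + complement_price
    return max(0.0, market_size - sensitivity * total_price)


def core_revenue(core_price, complement_price):
    """Revenue earned at the core layer only (the complement earns us nothing)."""
    return core_price * units_sold(core_price, complement_price)


# Hold the core price fixed and let the complement get cheaper.
for complement_price in (800.0, 500.0, 200.0):
    print(
        f"complement at {complement_price:.0f}: "
        f"{units_sold(100.0, complement_price):.0f} units, "
        f"core revenue {core_revenue(100.0, complement_price):.0f}"
    )
```

In this toy setup the core price never changes, yet core revenue grows from 10,000 to 70,000 as the complement's price falls from 800 to 200. Real demand curves are messier, but the direction of the effect is the point.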

Too theoretical? Let's have a look at some examples.

Microsoft's personal computer strategy in the 1990s shows this exact dynamic at play. Microsoft's core product was the Windows operating system, while the complement was the physical computer hardware itself. Rather than building its own machines or partnering exclusively with a single manufacturer, Microsoft licensed Windows non-exclusively to anyone who wanted to make PCs: this decision triggered fierce competition among hardware manufacturers like Compaq, Dell, and HP, who raced to undercut each other on price while producing increasingly capable machines. The result was the rapid commoditization of PC hardware: computers became dramatically cheaper and more accessible to consumers and businesses alike.

And guess what? This commoditization of hardware had a direct and powerful effect on Microsoft's core business. As PC prices fell, the total cost of computing dropped, and millions more people could afford to buy computers. But every one of those computers needed an operating system, and Windows was the de facto standard. While hardware manufacturers fought over razor-thin margins on physical machines, Microsoft collected licensing fees from every unit sold, regardless of who made it. The company essentially orchestrated an environment where its complement became abundant and price-competitive, while its own product remained the indispensable bottleneck through which value flowed. Deciding where scarcity should exist in the stack ultimately turned Windows into one of the most profitable software products in history.

The pattern Microsoft established wasn't simply about being open or generous with licensing. It was about understanding that in a system of interdependent products, value doesn't naturally accumulate where it's created: it accumulates where you can exercise control. Therefore, by ensuring that hardware remained fiercely competitive and low-margin, Microsoft guaranteed that no single hardware vendor could become powerful enough to dictate terms or fragment the platform. The operating system layer, by contrast, benefited from strong network effects and switching costs that created a durable moat.

This strategic logic reappeared decades later when Google launched the Chrome browser in 2008. At that point, Google's business model depended entirely on people using the internet to search for information and click on ads. The web browser was a complement to Google's core search business: people needed a browser to reach Google's search engine. But in the mid-2000s, Microsoft's Internet Explorer dominated the browser market, and its slow development pace and poor performance were arguably holding back the entire web.

Google's response was to build its own browser and then deliberately commoditize it by making the underlying Chromium project open-source and free. The company invested heavily in developing a faster, more stable browsing experience and then gave away both the finished product and its technical foundation. By ensuring that the browser layer became fast, capable, and widely accessible, Google removed potential bottlenecks that could constrain web usage. Internal Google testimony later revealed the quantifiable impact: users who switched from Internet Explorer to Chrome performed nearly fifty percent more searches, while those switching from Firefox performed about a quarter more. By commoditizing the browser, Google effectively increased the velocity and volume of web activity, which directly fed into search volume and advertising revenue.

The Chrome strategy also illustrates another dimension of commoditizing complements: preventing competitors from controlling your access to customers. Had Microsoft successfully used Internet Explorer as a bottleneck, it could have steered users away from Google or degraded the search experience. By funding and distributing a superior alternative, Google ensured that no single competitor could establish a chokepoint between users and the web itself. The company gave away the complement to fortify its position in the layer where it actually captured value.

This pattern of strategic openness and calculated commoditization extends throughout technology history, though it manifests differently depending on the specific market structure. When companies contribute heavily to open-source projects, release free tools, or broadly license technologies, the underlying logic often follows this framework. The key question isn't whether to be open or closed in abstract terms, but rather where in the stack you need to maintain differentiation and where you "benefit from abundance".

Deciding what not to commoditize

As with many business strategies, commoditizing complements isn't a universal prescription that applies everywhere. It requires careful judgment about which boundaries to defend and which to open. You need confidence that collapsing prices in the adjacent layer genuinely funnels demand to your core rather than undermining your own strategic position. The distinction matters because what serves as a complement today might become a core differentiation point tomorrow as markets evolve and customer needs shift.

The strategic tension underlying every implementation of this approach centers on selectively engineering where scarcity exists in the ecosystem. You push openness outward (lowering barriers and reducing friction in areas that amplify your reach) while pulling control inward around the elements where your competitive edge resides: you want an environment where customers encounter abundant, inexpensive, interchangeable choices around you, yet find themselves gravitationally drawn back to the one piece of the stack you control.

At a fundamental level, commoditizing a complement only makes sense when that complement is not the primary source of differentiation for your business. If the complementary layer is something customers perceive as interchangeable (hardware vendors, generic content, etc.) then making it cheap, ubiquitous, or free can remove barriers to adoption and sharply expand demand for your core offering. But if the complement starts to encroach on your own unique value (e.g. if it becomes the very thing customers pay you for) then commoditizing it can erode your strategic advantage rather than reinforce it.

Consider how this boundary plays out in practice. A platform company might release open APIs, developer tools, and integration frameworks precisely because they're complements that expand the platform's utility. But that same company will typically retain proprietary control over core protocols, unique workflows, or shared user identity, which are elements that anchor customers to the platform and are difficult for competitors to replicate. The effect creates an ecosystem that appears open and welcoming, yet structurally channels value back to the platform owner.

When Netscape open-sourced its browser in the late 1990s, the goal wasn't fostering developer goodwill for its own sake: it was commoditizing the gateway to the internet to drive demand for the server software where Netscape captured margins. Similarly, when IBM invested heavily in Linux and open-source enterprise tools, it was reducing the total cost of ownership for consulting clients, which expanded the budget available for IBM's higher-margin services. These moves reveal a pattern: sophisticated companies don't reflexively protect every asset they create. Instead, they maximize the value of their core by ensuring surrounding layers remain cheap and interoperable.

Apple's App Store offers another angle on this dynamic: by making it easy and inexpensive for developers to distribute mobile applications, Apple effectively commoditized the software layer while capturing value through hardware sales. The abundance of cheap or free apps (due to developers competing intensely on price) made the iPhone itself far more valuable as a platform. Users came to expect that "there's an app for that" for virtually any need, which in turn drove device adoption and loyalty.

The enduring insight is that commoditizing complements is fundamentally about value engineering and market architecture. You're deliberately shaping where economic surplus accumulates in a technology stack, redistributing it away from layers you want to remain competitive and channeling it toward layers you control. This requires understanding not just your own product but the entire system in which it operates, recognizing which dependencies drive demand for your offering and which represent potential points of competitor leverage.

Done well, this strategy creates an ecosystem where the complement becomes abundant and interchangeable, while your platform or product becomes the anchor, i.e. the place where customer loyalty and economic value concentrate. The openness around you makes the entire system more accessible and attractive, while the control within you ensures you capture the returns. That tension between openness and control, between commoditization and differentiation, is what transforms a clever tactical move into a durable strategic advantage.

Does this work in AEC?

Now, is this concept relevant to founders in AEC-tech (or the project-based economy, more generally)? Of course, otherwise I would not be writing about it.

So let me share with you why (and its caveats/nuances).

I think it's clear that many of the (emerged and emerging) winners in AEC-tech (speaking here specifically for the software market) follow a similar model: they are data infrastructure platforms that serve as the connective tissue between fragmented stakeholders and disparate systems, and occupy a strategic position analogous to operating systems in traditional software markets. At the same time, they face a crucial architectural decision that will determine whether they build durable moats or get outflanked by more flexible competitors: should they attempt to own and develop every application feature themselves, or should they deliberately foster an ecosystem where third-party developers and AI applications compete to provide those features?

The answer increasingly points toward ecosystem openness. Well, for most players, at least ;). The strategic logic mirrors the commoditizing complements framework shared earlier: by making the application layer abundant and competitive, infrastructure platforms increase the indispensability of the underlying data layer they control (remember: in multi-layered technology stacks, value concentrates where you have structural control and diffuses where you encourage competition).

I know, sounds theoretical again! So let me share an example.

Procore Technologies has embraced this model explicitly through its platform-centric strategy. The company operates an app marketplace that has grown to encompass over five hundred integrations with independent software vendors as of late 2025. Rather than jealously guarding every potential feature, Procore opened its APIs and invited external developers to build specialized solutions on top of its core infrastructure. The result for customers? Project efficiency gains approaching twenty percent and delay reductions around thirty percent. The result for Procore? These outcomes reinforce the perceived value of the product and Procore's customer retention, which exceeds ninety-five percent on a gross revenue basis.

By allowing specialized applications to flourish around its core platform, Procore ensures that when construction firms need niche functionality, there's almost certainly a solution available in the marketplace: the platform becomes stickier not despite this openness but because of it! Customers perceive Procore as the central operating system that connects their entire toolkit, making it dramatically harder to switch away even if a competing platform offers better core features.

The economic mechanism at work here is that Procore has effectively outsourced "innovation risk" (to some extent) to its developer ecosystem. When an independent software vendor builds a specialized application that fails to find product-market fit, Procore's core infrastructure remains unaffected. But when a developer creates something genuinely valuable, Procore's platform becomes more essential to end users. The company captures value through platform subscription fees and the gravitational pull created by ecosystem lock-in, rather than trying to monetize each individual application feature. This mirrors how Microsoft profited from Windows dominance while PC peripheral makers competed fiercely, or how Google captured search advertising revenue while browser technology became commoditized.

Procore does maintain boundaries around this openness, though. The company's developer policies explicitly prohibit third-party applications from replicating core platform functionality that would directly compete with Procore's primary offerings. Still, Procore provides sandbox environments and extensive API access for applications addressing hyper-fragmented problems the company itself cannot efficiently solve, while maintaining control over the foundational project management capabilities that define its market position.

All of this brings me to the following point: the ultimate prize is becoming the authoritative source of project intelligence/data that every application must interface with, regardless of which specific tools customers use for particular tasks. You want to focus on value capture at the infrastructure layer while deliberately making the application layer competitive and abundant. This is important, and deserves a section of its own.

Which layers actually matter in AEC-tech

(Before we proceed with the next two sections, I want to remind you that the following perspectives are mine and, as such, not absolute truths. Also, not everyone will agree.)

What follows from what we've said so far is that if you're building in AEC software and you want a shot at capturing disproportionate value, you should be opinionated about where value structurally accumulates in the stack. The winning layers are the ones that define the grammar of work: the system of record where project truth lives, the data infrastructure that moves that truth across stakeholders, and the authoring tools where that truth is first created. Why? Because these layers don't just sell software: they set the terms by which other software must exist. Everything above them (plugins, automation scripts, niche vertical add-ons, AI agents, etc.) can be useful, even beloved, yet still remain economically fragile, because it doesn't control the substrate. These products are complements, and complements can be flooded.

This is the uncomfortable lesson from CAD/BIM incumbents. The plugin economy looks vibrant from the outside, but the center of gravity barely moves: the typical add-on rarely owns durable data, rarely becomes the system of record, and rarely accumulates long-lived switching costs on its own. It lives on borrowed distribution (the host platform's user base), borrowed legitimacy (the host platform's file format), and borrowed continuity (the host platform's APIs and release cycle). Even when a plugin becomes "must-have" inside an office, it tends to be "must-have for Revit" or "must-have for Rhino", which is a subtle but decisive distinction: the dependency points inward. That's why, in practice, the ecosystem ends up with dozens (or more) of near-substitutes. If one vendor raises prices, another appears. If one stalls, firms rebuild the workflow in-house. The complement is interchangeable precisely because it doesn't own the rails.

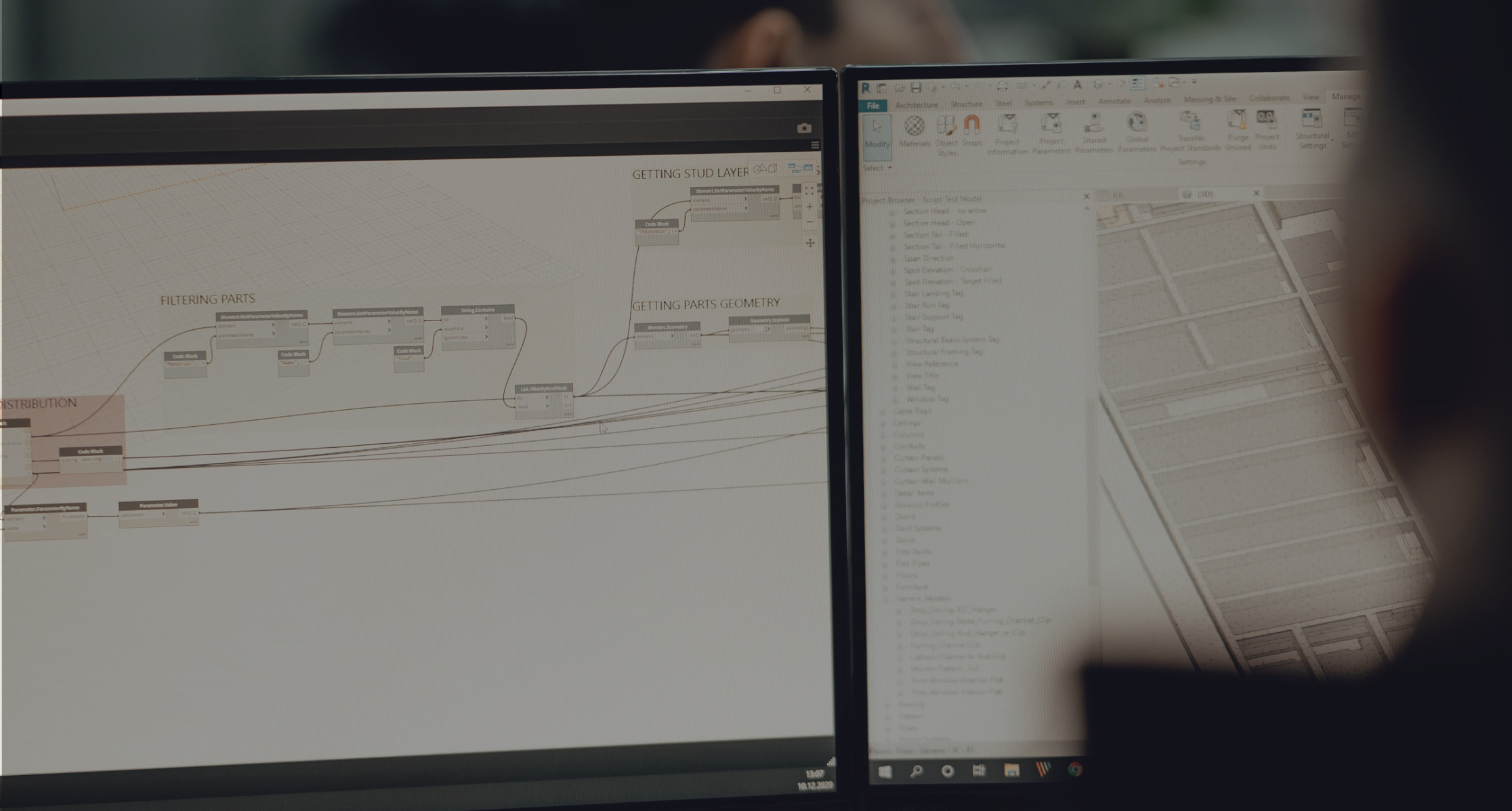

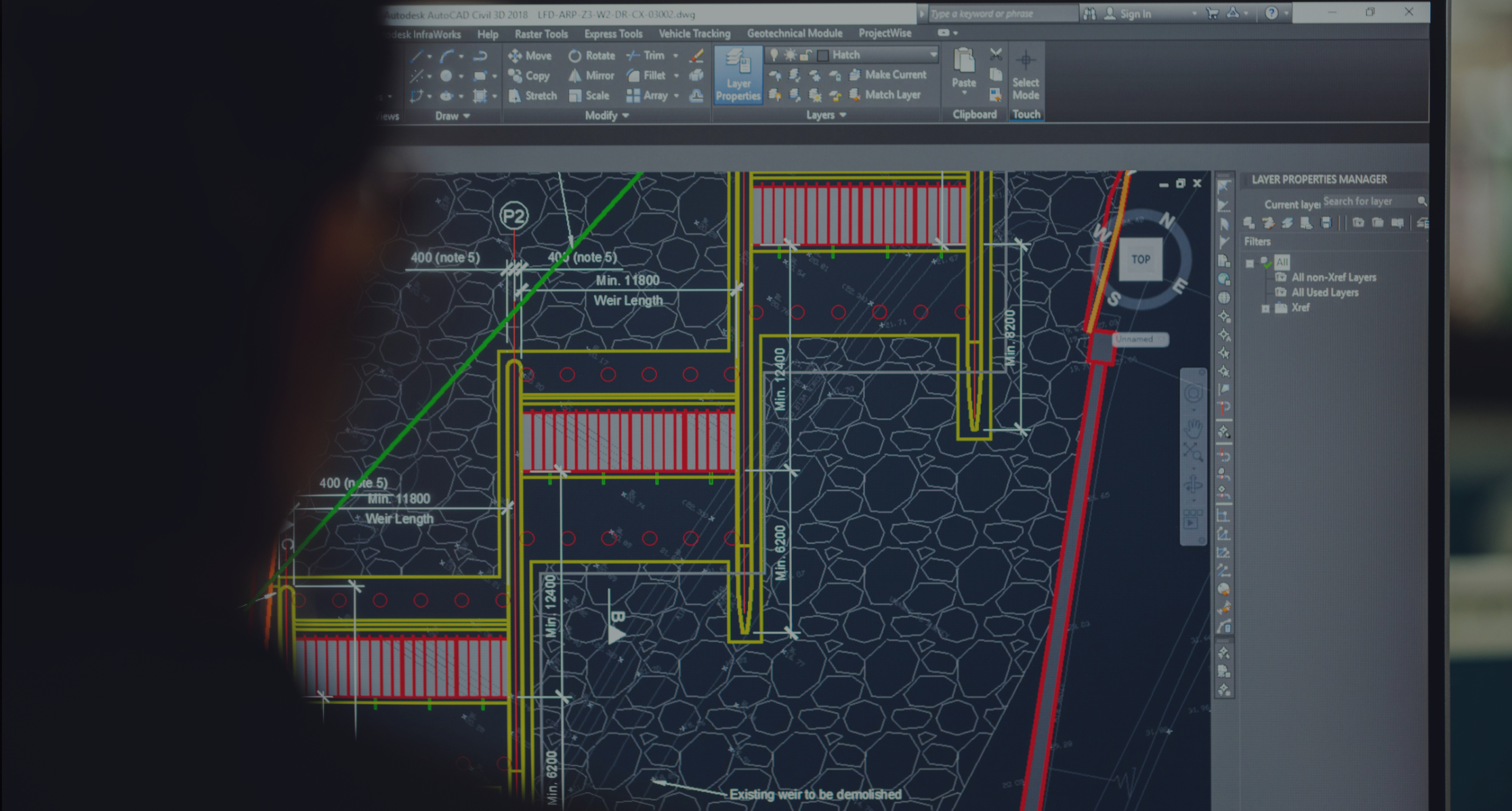

Autodesk's flagship BIM application, Revit, is a case in point. Revit has a rich API and thousands of plugins created by third-party developers to extend its functionality, e.g. for structural analysis, environmental simulation, drawing automation, and now AI-driven generative design. Autodesk's interest is in selling Revit subscriptions, not in nickel-and-diming customers for every add-on. Thus, it has embraced a thriving plugin ecosystem, even hosting an Autodesk App Store with many free or inexpensive extensions.

Critically, Autodesk commoditized the generative design complement by providing its own visual programming tool, Dynamo, at no extra charge. Dynamo, which allows designers to algorithmically generate and manipulate geometry, was released as open-source software and is bundled free with Revit. This move was highly strategic: a few years ago, generative design was the hot new complement to BIM modeling, with alternative solutions like Grasshopper (for Rhino) gaining popularity. By making Dynamo ubiquitous and open, Autodesk ensured Revit users didn't need to pay another vendor for generative design, since they got it "for free" with the platform. Today, Dynamo is an integral part of Revit and has its own community of script developers, all feeding back into Revit's value.

In essence, Autodesk sacrificed the opportunity to sell a generative design product in order to bolster Revit's attractiveness (in fact, Dynamo was for a time offered as a paid standalone product, Dynamo Studio, but that offering was later discontinued). The strategy worked: Revit remains the industry-standard BIM tool, partly because it's surrounded by a cornucopia of plugins and scripts that cost users very little. Autodesk still captures value through hefty Revit subscription fees and cloud service revenues, not by charging for each plugin or Dynamo itself. By commoditizing the application layer (plugins, AI assistants, etc.), Autodesk preserves its core stronghold.

The result is a thriving market of parameter tools, model health checkers, sheet automation, and content managers, yet none of these categories captures anything close to the value captured by the core authoring environment. And crucially, the dependency still resolves back to the native model. That is the choke point. (And when interoperability threatens to become a true escape hatch, you see the defensive posture immediately: export may exist, but "round-tripping" reality often doesn't.)

Rhino shows a similar approach. Grasshopper turned the authoring environment into an algorithmic playground and catalyzed an explosion of community tooling: simulations, optimizations, fabrication workflows, analysis utilities, niche geometry operations. The important economic move is that this explosion didn't create a single dominant middleware tollbooth; it created a marketplace. Hundreds of small tools compete, many are cheap, some are free, and almost all of them deepen the user's dependence on the base platform because the accumulated scripts, definitions, and even "office lore" live in Rhino's orbit. Even where McNeel appears unusually open (making it easier for others to read and write the .3dm world), it doesn't eliminate the underlying authoring gravity. It expands Rhino's reach and makes Rhino-compatible workflows feel safer, because compatibility is less brittle. That openness commoditizes friction, not the center.

The approach followed by both companies creates powerful network effects and switching costs that compound over time. Users don't just adopt Revit or Rhino as isolated tools: they build entire workflows around them, accumulate libraries of scripts and plugins, and develop organizational knowledge about how to extend the platforms. Moving to a competing system means abandoning this accumulated investment and rebuilding capabilities from scratch. And the more abundant and sophisticated the plugin ecosystem becomes, the higher these switching costs climb.

Moreover, by encouraging a competitive ecosystem for complementary tools, the authoring platform owner offloads research and development burden while simultaneously increasing user lock-in. When Autodesk releases a major Revit update that breaks plugin compatibility, third-party developers bear the cost of fixing their tools, not Autodesk, and still the user's dependence on the Revit ecosystem remains fundamentally intact. This is the practical application of the commoditize-your-complement framework at the application layer: minimize direct control over peripheral functionality to maximize the value and defensibility of the core technology.

In addition, by encouraging third-party developers to build specialized solutions for these niches, platform owners avoid a complexity trap (namely, the exponential increase in maintenance costs and technical debt a company faces when a system attempts to handle too much domain-specific logic internally). The authoring tool remains focused on its core competency (providing robust geometric modeling and data management) while specialized providers handle the domain expertise needed for particular workflows. This division of labor allows the ecosystem to scale across use cases that would be prohibitively complex for a single vendor to support directly. Each successful plugin makes the underlying platform more valuable without adding technical debt to the core codebase.

So the founder takeaway for me is quite blunt: if you're building "a plugin", an "AI agent", a point solution, etc., sitting on top of authoring tools, systems of record, ERPs, or data infrastructure, you should assume you are volunteering to be commoditized, either by a swarm of substitutes, or by the platform owner the moment the category matters enough. The thing is: the platform doesn't even need to copy you perfectly. Just bundling 70-80% of your functionality for "free" inside the subscription is often enough to collapse your pricing power. You only escape that trap if you move down-stack: become the system of record, become the data backbone, become the authoring surface, or at least own a durable proprietary dataset and workflow loop that the platform cannot trivially internalize. Because the only companies that reliably get to set commodity conditions are the ones that own the layer everyone else must plug into.

In AEC, those are the layers that define the file, the model, the truth, and the transaction/interoperability boundaries, not the layers that decorate them.

Building at the layer that matters (an investor's lens)

To conclude, from an investor's (specifically: mine) perspective, the most important question is not whether a founder is "AI-native" or whether their workflow feels modern in the AI age, but whether they are building at a layer that can set the rules for everyone else.

In AEC software, there are only a few such layers: systems of record (ERP-like systems that own commercial truth), data infrastructure (the pipes that move models, issues, and metadata across stakeholders), and authoring tools (the surfaces where the model is created and edited). These layers don't just solve tasks; they define the canonical representation of work, and that definition is the real moat. It is why these businesses can commoditize everything above them (plugins, add-ons, copilots, point solutions): the platform can always decide that a feature category should become abundant, bundled, open, or "good enough".

The uncomfortable truth is that these categories are also the hardest to build, and this difficulty is precisely the point!

A system of record is sticky because it becomes the place where contracts, costs, approvals, and accountability live: once embedded, it isn't just some swappable "software", it's the firm's operating memory. An authoring tool is sticky because it is both interface and engine: it owns the data model, the geometry rules, the parametric relationships, and the workflows teams have spent years institutionalizing. Displacing these incumbents requires more than a nicer UI or a faster feature: you need to survive the long march of feature parity, migration tooling, standards alignment, enterprise security posture, change management, and (most painfully) retraining the people whose job is to produce work, not to beta test new tools. The go-to-market is slower, the customer education burden is heavier, and the path to monetization is longer because you must earn trust at the point where mistakes are expensive and reputation is fragile.

Yet that same difficulty shapes the competitive response function in your favor. When you attack a system of record or an authoring layer in AEC and you begin to win, you do not trigger a typical SaaS "knife fight" where ten competitors can clone each other. You trigger a different pattern: incumbents can defend with distribution and bundling, but they are structurally slow at rebuilding their own foundations. Their codebases are old and their roadmaps are negotiated with thousands of existing deployments that cannot break. The closer you get to being "the new center", the more acquiring you becomes the rational move for the incumbent, because copying becomes a platform rewrite, and rewrites are where large software companies go to die. This is why the ceiling on these layers is so high: if you truly become the new record or the new authoring surface, value capture is not merely incremental!

Now compare that to building "on top", e.g. the AI agent that sits above Revit, the plugin that automates BIM hygiene, the specialized checker that runs model QA, the optimization tool that suggests layouts, and so on. These products can be good businesses, and they can be acquisition magnets precisely because they are easy to plug into existing distribution. But they live under a permanent strategic shadow: the platform owns the customer relationship, owns the data, owns the interface, and can decide that your category should be bundled, standardized, or made free-ish to keep the ecosystem vibrant while protecting the core. The ceiling is therefore lower not because the product can't be great, but because the layer cannot accumulate compounding switching costs on its own.

This is why distribution becomes the optimization function for "on-top" companies. If you don't own the substrate, you must own the channel, or at least embed so deeply into the workflow that replacing you is socially and operationally painful. You win by being everywhere: by becoming the default plugin, the default automation, the default assistant, the default workflow template that spreads through communities faster than platform roadmaps move. You win by making adoption obvious.

So, if you want maximal value capture, you bias toward systems of record, data infrastructure, and authoring layers even though they are slow, painful, and technically brutal, because they are the only layers that can reliably commoditize the rest and keep that privilege. If you build on top, you accept a different trade: faster shipping, faster distribution, often faster revenue, and a higher chance of becoming an acquisition target, but at the same time, you live with commoditization risk as a permanent tax on your outcome. In other words, platforms are hard to build and hard to displace, but that is exactly why they are worth building; complements are easier to build and easier to sell, but that is exactly why you must outrun the platform's ability to make you "just another feature".

Alright, time to bring this home.

Conclusions

Let's summarize.

In AEC software, the most valuable businesses aren't necessarily the most innovative or the most loved by users, but they are the ones that control the layers where everyone else must plug in: they are the systems of record, data infrastructure, and authoring tools. These are tools that define the terms by which every other solution in the ecosystem must operate. And once you control those terms, you earn the right to decide which adjacent categories should remain scarce and which should become abundant.

For founders, this creates a difficult choice. If you're building on top of someone else's platform, you're volunteering for a world where your pricing power can evaporate the moment your category matters enough for the platform to bundle a "good enough" version. You can still win by owning distribution, but you're playing a different game with a different ceiling.

If you're building the platform itself (the system of record, the data backbone, the authoring engine, etc.), then you're signing up for a longer, harder, more capital-intensive path. But that difficulty is precisely what creates the moat. And when you succeed, you don't just capture value from what you build: you capture value from the entire ecosystem that forms around you, because you're the one setting the rules.

The pattern is clear across technology history, and AEC is no exception.

If you're building in this space, reach out to us at Foundamental. We'd love to hear about it!

More pieces like this are coming, so don't forget to subscribe to my newsletter if you don't want to miss the next one!

