What does a software engineering organization actually look like in the AI-native era, once you let go of the legacy mindsets, the legacy cultures, the routines that worked five years ago? We don't think anyone has it fully figured out, but we wanted to share what we've been observing across our portfolio and the operators we talk to most.

This Week On Practical Nerds - tl;dr

Why every company today must operate as an AI-native firm, and how to do it

The case for separating your engineering organization into a "run the business" function and a dedicated "bet-taking factory"

Our bet on the rise of the commercial AI engineer: a STEM or CS-trained operator who spent most of their career in growth, RevOps, launcher, investing, or founder roles

Why traditional engineers with 5 to 15 years of experience tend to struggle more in the AI transition, while engineers with 20-plus years of experience and fresh graduates tend to deliver the highest ROI in AEC tech and beyond

How to hire the new class of head of engineering

🎧 Listen To This Practical Nerds Episode

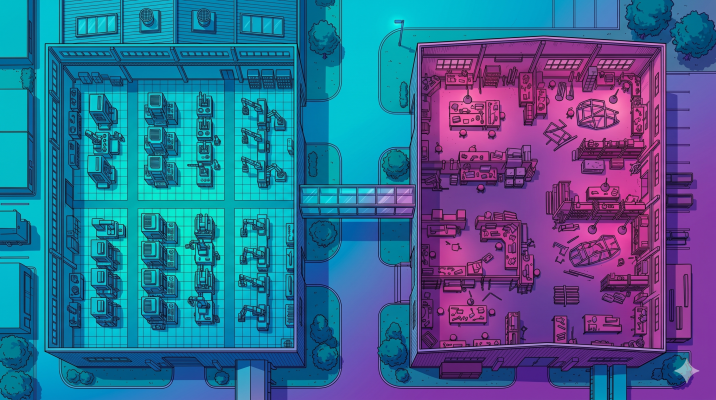

AI Native Orgs Separate “Run The Business” From “Transform The Business” Teams

There was a time when companies differentiated themselves by calling themselves "internet enabled," "consumer internet," or "mobile app" companies. Shub and I are of a vintage that remembers that clearly. Today, we're seeing the same pattern repeat with the label "AI-native firm." Our hypothesis is that, just as a company that isn't internet-enabled can no longer be relevant, there will be no place for a company that isn't AI-native either, especially one being built today. That means the entire organization, not just one team, has to operate with an AI-native mindset.

But this is where, in our observation, most founders we speak to make the same mistake. They assume that AI-native means everyone in the organization keeps doing the same thing, just with AI on top. We don't think that's right. We think it actually requires something quite uncomfortable: literally splitting your organization into two functions and separating engineering across both.

We've been seeing organizations ship at a really impressive clip when they distinguish between a run-the-business part and a transform-the-business part. The run-the-business side optimizes for reliability, dependability, steady cash flows, capital efficiency, and labor cost efficiency. The transform-the-business side optimizes for taking bets, exploring new opportunities, and opening up optionality. These are two completely different optimization parameters. And we have not yet seen a single person or team handle both successfully when asked to do both at the same time.

The analogy I keep coming back to is venture capital. If you ask a VC to take bets, but at the same time ask the same VC to optimize the portfolio for steady cash flows, what happens? Goal conflict. The same person ends up doing neither well. And that goal conflict is exactly what plays out inside organizations every day. When you ask an engineering manager to make the existing platform more reliable while also asking them to ship a wild new bet that could break it, you're asking them to pull in two opposite directions at once. They can't do it.

In our view, this is a fundamental reason why so many unicorns we've watched over the years never become decacorns. Scaling and compounding are not the same thing. To compound, you need an opportunity that can absorb more optionality on the left and the right, but you also need an organization that continues to take bets while the existing business runs reliably. And that's hard. We think it's actually one of the less visible mechanisms behind Clayton Christensen's innovator's dilemma. The same person being asked to deliver both ends up choosing the safer outcome almost every time, because that's how human incentives work in incumbent contexts.

And let's be clear: incumbent isn't a synonym for old. Salesforce is an incumbent. Netflix is an incumbent. Plenty of technology companies that we still think of as innovators are now incumbents in their core categories. The trap of run-the-business swallowing bet-taking is universal. It just shows up later in the lifecycle for some companies than for others.

In startups, founders often tell us, "Yeah, but we take bets all the time, that's not our problem." Initially, sure. But what happens when you actually succeed? What happens when you have a million customers depending on your product? You know what you have to build next to it? The boring, dependable thing. So even if you start out as a pure bet-taking organization, the moment you get traction, run-the-business becomes a real function that someone has to own. And if you don't separate it from your bet-taking on purpose, run-the-business will quietly absorb the bet-taking, because that's what optimization parameters always do under uncertainty.

Now here is a counterintuitive piece. Even before the AI era, some of the best companies we know of had already institutionalized this split. Lockheed Martin's Skunk Works is, in our view, the original example of a bet-taking factory. Honda used to have a very strong research organization that operated almost like NASA, free to take technology bets that were far away from automotive chips and far away from the core business. We're not sure how they operate today, but it was a widely cited case study 10 to 20 years ago. And in the modern startup world, the examples we keep coming back to are Revolut, Uber, and Rocket Internet.

Revolut, under Nik Storonsky, has literally done this. Next to a founder's office, there is an explicitly named "crazy bets" team. Their job is to identify big opportunities and bring them to a point where they can be launched. Uber's organization for launching into new geographies, and new products inside existing geographies, was, from what we've observed, a bet-taking factory that was resourced with very specific profiles of people and ran on a playbook. And Rocket Internet was probably the OG of this in our part of the world. The bet-taking function was structurally separated from the run-the-business function and resourced with people who were, almost by job description, optimized for bets.

So when operators ask us how to organize for AI compounding, our first answer is almost always: don't ask the same teams to do both. Build the bet-taking factory on purpose, separate from the run-the-business teams. Not because they shouldn't collaborate; they should. But because the missions, the metrics, and the optimization parameters are different enough that putting them under the same roof creates goal conflict that you can't engineer your way out of.

We don't see this often in startups, by the way. Most of the early stage companies we meet still ask their entire organization to do both. The ones that quietly compound, however, are usually doing some version of this split, even if they don't articulate it that way. So if you're an operator reading this, the first question we'd suggest you sit with is: who in your organization is on run-the-business, and who is on transform-the-business, and is that split clean enough that nobody is being asked to do both?

The takeaway we keep landing on is that AI native isn't just a tooling decision. It's an organizational design decision, and the cleanest design we've observed splits run-the-business from transform-the-business at the team level, not just at the individual contributor level.

Why Commercial AI Engineers Often Outship Traditional Software Engineers

Once you've split the org, the next question becomes who you put inside the bet-taking factory. And this is where Shub and I have noticed something specific in the last few quarters that we think is worth flagging for operators, especially those building startups that are not bottlenecked by deep research.

We've been calling this profile the commercial AI engineer. To be clear, that's a label we've coined to describe what we're seeing, not an established job title. And we want to caveat upfront: this profile is not the right fit if your bottleneck is fundamental deep tech research. If you need to push the frontier of materials science or model architectures, this is not your person. But for the vast majority of startups we look at, where the bottleneck is shipping commercial outcomes against a real market, we keep observing this archetype outperform.

Let me describe what we mean. The commercial AI engineer is, first, a person with a STEM background. Computer science, mathematics, physics, mechanical engineering, electrical engineering. We've even seen nuclear engineering. The undergrad and maybe the first one, two, or three years of professional experience are technical. So far that sounds like a software engineer. Here's where it diverges, and this is the counterintuitive part.

The majority of their professional life was not spent as a software engineer. Instead, the majority was spent in commercial project functions. So if you're looking at someone with three years of experience, ideally most of those three years were commercial. If you're looking at someone with ten years of experience, maybe three years were software engineering and seven were commercial. The longer the career, the larger the ratio of commercial functions should be. We are not describing a software engineer with a sprinkle of commercial exposure. We are describing the inverse: a commercial operator with real STEM foundations.

What do we mean by commercial project functions? In our experience, the high-yield ones are pretty specific. RevOps and growth roles where the person carries a number and ships under pressure. Launcher roles, the kind that exist explicitly inside Uber-style or Revolut-style organizations, where you go into a new geography or a new product and bring it from zero to live. Investment roles, but only the intense ones with an extremely high frequency of decisions and repetitions per year. Commercial project manager roles, sometimes. And then the king of this category, in our observation, is the founder. If you can find someone with a STEM or CS background who has actually been a founder, who has lived the high-pressure, high-outcome reality of building, that profile tends to be exceptional inside a bet-taking factory.

Now here is what we've been seeing happen when you put this person to work in an AI-enabled environment. Pre-AI, this person probably wouldn't have shipped much software. Their coding skills, while not absent, weren't going to outpace a dedicated software engineer's. But with AI tooling, with tools like Claude Code and other modern coding agents, the same person can ship software and automations at an absolutely insane clip, often faster than a non-STEM, non-CS-trained person can. We're being deliberately rough with the numbers here, because there are exceptions and there are people who have trained themselves very well, but in the cookie-cutter version: this person is now roughly a 70% good coder plus a 70% good commercial operator, in one body.

That combination is rare. And it matters because when you trace what happens inside startups, you keep running into the same friction points: the interface between engineering and product, the interface between product and commercial, and the handoffs, misalignments, and translation losses that come with them. We've all been on the wrong side of those interfaces. Putting commercial AI engineers into your bet-taking factory eliminates both interfaces, because they sit inside one person. The shipping velocity isn't just a function of them being smart; it's a function of the interfaces themselves disappearing.

We've been observing the results of this for a few quarters now in our portfolio and elsewhere, and the difference is striking. Features that used to take weeks of back-and-forth between three roles now ship in days because one person owns the loop end to end. New product modules get launched without ever leaving the head of one operator. Internal tools get built and deployed without a single ticket being created. And, importantly, the commercial outcome is not lost in translation, because the person shipping the code is also the person who understands what the customer is actually willing to pay for.

This is also where, in our view, the conversation about engineering teams gets reframed entirely. When operators ask us, "But where does this leave the engineering team?", we'd actually push back on the framing. We think more and more of the organization, in the AI native era, is engineering in some functional sense. They're not all engineering in the title-on-a-business-card sense, but they're all shipping, all interacting with code, all participating in the build. The engineering team in the traditional sense becomes much smaller and plays a different role, which we'll come to in the next section.

A practical implication of this for hiring: if you're staffing a bet-taking factory, we'd suggest you stop optimizing only for senior software engineers and start scouting for commercial AI engineers. The talent pool isn't where you think it is. They're sitting inside operating roles at fast-shipping companies, often slightly bored, often underestimated, often great hires for the type of bet-taking environment we're describing. The CV signal is a STEM degree plus a career in high-intensity commercial project work. The interview signal is whether they can take a vague problem, decompose it commercially, and ship a working prototype the same week.

The caveat we want to repeat: this profile is not a substitute for deep tech research talent. If your bottleneck is the model itself, or a hardware breakthrough, or a fundamental scientific question, you need a different kind of engineer. But if your bottleneck is figuring out which features and products actually move the commercial needle in a market, and shipping them faster than the competition, this is the archetype we keep seeing outperform.

The shift we're observing is that the highest-leverage hire for a bet-taking factory might no longer be the senior software engineer, but a commercial operator with a STEM foundation who can use AI tooling to collapse the engineering, product, and commercial functions into one person.

Why The 5 To 15 Year Engineering Cohort Often Struggles With The AI Transition

If we're saying that a lot of shipping moves into the bet-taking factory and into the commercial AI engineer profile, the obvious next question becomes what the traditional engineering function looks like in this world, and who runs it.

Before we answer that, we want to share an observation about the transition itself, because we don't think it's clean, and we don't want to pretend it is. What we keep seeing inside startups that try to AI-enable a traditional engineering team is a particular failure mode. Some engineers adopt AI tooling early and aggressively. Others are slower to adopt. They're not necessarily suspicious or resistant, but they have routines that have worked for them, ways of working they trust, and the transition takes time. So already inside a single team you get a split between fast adopters and slow adopters.

Then, even once everyone is on board, the next problem appears. Every individual now has AI superpowers, and the longer they apply those superpowers, the more they each build their own little archipelago of solutions. Their own routines. Their own tooling preferences. Their own little ways of doing things. From a distance it looks like everyone is shipping, and the business outputs appear to fit together. But underneath, you're accumulating spaghetti. Different styles, different ways of working, different micro-stacks. The interfaces aren't between the people anymore; they're between everyone's individual archipelagos. And then someone has to tie all of that together, or it eventually breaks.

This is the transition we've been watching unfold over the last six to twelve months in companies trying to AI-enable a previously traditional engineering setup. And it's why, in our current view, you shouldn't try to keep a large engineering team that ships a lot in this new world. Instead, the engineering function becomes highly AI-leveraged but smaller, and its job changes.

In this design, we see three jobs for the engineering function. First, provide tooling: the stack the rest of the organization uses, the coding agents, the deployment pipelines, the standards. Second, provide the interfaces: the APIs, the MCP (Model Context Protocol) servers, the connective tissue that lets the bet-taking factory plug its work into the rest of the system without rebuilding it every time. Third, take the modules that the bet-taking factory ships and make them boring. Make them dependable. Make them scale. Tie them together so that the archipelago becomes a continent. And the way you increase your odds of pulling that off is by giving the bet-taking factory the standards, the tooling, and the interfaces in the first place, so that what they hand back doesn't need to be rewritten.

If that's the function, then who runs it? This is where we got really interested in some primary data we saw recently. A friend at another VC fund did an inventory across their portfolio. Not a survey, more of an observational pattern-matching exercise across companies that had succeeded or struggled with engineering transitions over the last few months. They shared the tl;dr with us. We want to flag that they didn't separate their analysis the way we just did. They didn't talk about the bet-taking factory or the commercial AI engineer; they assumed engineering would always be engineering, which we partially disagree with. But within their lens, their findings were really sharp.

They found two cohorts of engineers who succeed in this new environment, and one cohort that consistently struggles. The cohort that struggles, in their observation, is the one with roughly five to fifteen, maybe eighteen years of experience. The reason they offered makes sense to us. These engineers aren't fresh enough out of university to have absorbed AI as part of their default working style, but they also aren't yet experienced enough to anticipate clearly how an architecture can evolve, how it can be reverse engineered with extreme certainty, and how, once you've reverse engineered it, you can replace your human agents in the architecture with coding agents. They're stuck in the middle. Too senior to be naively AI-native, too junior to have the architectural foresight that compensates.

The first cohort that succeeds, in their observation, is the engineer with twenty plus years of true software engineering experience. Counterintuitive, but we found it really compelling. The reason is that this person has seen so many architectures evolve that they can pattern-match instantly on how a system will need to be structured, where the fault lines will appear, and how to swap human contributors for coding agents at the right layers. And, crucially, this person seems to feel freed by AI rather than threatened by it. There's a quote that stuck with us from one of those interviews: "I'm glad I don't have to manage people anymore. They didn't do anyway what I wanted. I had to do this, that, and the other with the agents. I still have to do that, but I don't have to communicate as much, I don't have to do people management as much. They don't ask me for a bonus. They don't ask me for a performance review. I can have five to eight, ten agents running in parallel. It's the same thing, just better."

We don't think every twenty-plus-year veteran feels that way. But the ones who do are, in our observation, the highest-ROI engineering hires in the market right now. Rare, expensive, hard to find, but extremely valuable.

The second cohort that succeeds is the fresh graduate. Someone coming out of university where AI has already been baked into the stack from day one. There is no transition for them, because they never had a pre-AI working style to abandon. They lack experience, of course, and that comes with its own problems, but for the right kind of work and the right level of supervision, they can be unreasonably productive.

So the gap is the middle. Five to fifteen years, no clean fit. We're not saying every engineer in that range struggles. There are absolutely exceptions, people who've put in the work to retrain themselves and now ship like the twenty-plus-year cohort. But the base rate looks unfavorable in the data we've seen, and operators should at least be aware of that when they're hiring or restructuring.

Now to the persona of the head of engineering. If the function is smaller, more leveraged, and oriented around tooling, interfaces, and consolidation, then the job description changes. We don't think the new head of engineering needs to be a great people manager in the traditional sense, because there are fewer people to manage. What they need are great interpersonal skills, because they have to work closely with everyone outside the engineering function. The function isn't a stratified silo anymore. The role is more like being the conductor of an orchestra who occasionally has to step in and play a difficult passage themselves.

When we think about how to actually hire this person, Shub and I think the process needs to be inverted from the traditional engineering interview. You wouldn't start with deep technical drills. You'd start with interpersonal and contextual stress tests. Almost a management case study, but with a twist: you constrain them by saying they don't get to be a people manager. They have to operate as an individual contributor inside a team where they're the only engineering person. That sounds like a normal startup job description, but in our experience it's surprisingly hard to find people, especially with twenty-plus years of experience, who can genuinely thrive under that constraint.

The second step would be to expose them to part of the commercial AI engineering function. That's where you test whether information flows freely through them, whether shipping cadence accelerates when they're in the loop, and whether they can handle the rapid repetitions that the bet-taking factory operates on.

Then comes the technical assessment. We think you still need this, especially for someone with twenty years of experience, because you want to verify the depth. The challenge is that very few people inside your team can credibly run this interview. So if you have access to a world-class CTO who can do one, two, or three technical interviews for the advanced candidates as a favor, take them up on it. This step is constrained for many founders, we know. But it's worth fighting for.

And finally, there's an alignment conversation. By the end of the process, you want to see whether the candidate vigorously agrees with the foundational principles we've been describing in this episode. That you don't want an empire builder. That the engineering function is smaller, more leveraged, and oriented around enabling the bet-taking factory. That the world has changed compared to what they experienced for the last twenty years. If they nod politely, that's not enough. If they get visibly excited, that's your person.

We want to be careful here. This is the design we keep seeing work in our portfolio and across the operators we talk to most. We don't think it's the only design that works. There are surely organizations that have figured out variations we haven't observed. And we're skeptical of anyone, including ourselves, claiming to have a final answer in a moment that's still moving as fast as this one. But this is the pattern that keeps coming back when we look at the companies that are actually shipping.

What we keep noticing is that the cleanest hire in this new world is either a twenty-plus-year veteran who's gone all-in on coding agents, or a fresh graduate with native AI fluency, and that the head of engineering's job has shifted from running people to enabling a bet-taking factory through tooling, interfaces, and consolidation.

Our Practical Framework

If we had to compress what Shub and I have been observing into a framework an operator can act on this week, it would have three steps. First, split your organization into run-the-business and transform-the-business, and resource the bet-taking factory deliberately, the way Revolut, Uber, and Rocket Internet have done it. The bet-taking factory takes bets. The run-the-business teams make things dependable. Don't let one absorb the other.

Second, staff the bet-taking factory primarily with commercial AI engineers. STEM or CS background, but the majority of their career in commercial project functions: RevOps, growth, launcher roles, intense investing roles, founder roles. AI tooling brings them to roughly 70% coding capability, and their commercial instincts add a roughly 70% commercial capability that traditional engineers usually don't have. They eliminate the engineering-product-commercial interfaces inside a single human, which is where most of the speed comes from.

Third, build the smaller engineering function from one of two pools. Either the twenty-plus-year veteran who has gone all-in on coding agents and is happy to leave traditional people management behind, or the fresh graduate with native AI fluency, supervised appropriately. We'd be cautious with the five-to-fifteen-year cohort unless you find a clear exception. The job of the head of engineering is to provide tooling, interfaces, and consolidation discipline so that the bet-taking factory's output doesn't fragment into an archipelago.

When we see operators run some version of this framework, we observe three things. Shipping velocity goes up because interfaces inside the org disappear. Compounding becomes possible because bet-taking is institutionalized rather than improvised. And the engineering function stops being the bottleneck and becomes the multiplier. We don't think this is the only path to an AI native organization. But it's the one that keeps showing up in our observations of the companies that are quietly outshipping their peers right now.

You Can Find More Analysis On The Practical Nerds Podcast

Spotify: https://open.spotify.com/show/1Q86tEwusNGwAmRdDqjFL4

Apple: https://podcasts.apple.com/de/podcast/practical-nerds/id1689880222

Foundamental: https://www.foundamental.com/

Subscribe to the Newsletter: https://www.linkedin.com/newsletters/practical-nerds-7180899738613882881/

Companies Mentioned

Uber: https://www.uber.com/

Revolut: https://www.revolut.com/

Rocket Internet: https://www.rocket-internet.com/

Honda Research: https://global.honda/en/RandD/

Airbnb: https://www.airbnb.com/

Salesforce: https://www.salesforce.com/

Netflix: https://www.netflix.com/

Palantir: https://www.palantir.com/

Follow The Practical Nerds

Patric Hellermann: https://www.linkedin.com/in/aecvc/

Shub Bhattacharya: https://www.linkedin.com/in/shubhankar-bhattacharya-a1063a3/